Pattern #67


Buy or Download

To buy or download the complete Wise Democracy Card Deck use the Buy & Download button.

Comments

We invite you to participate with us in evolving this pattern language. Use the comment section at the bottom of this page to comment on its contents or to share related ideas and resources.

Prudent Progress


Credit: Comeback Images – Shutterstock

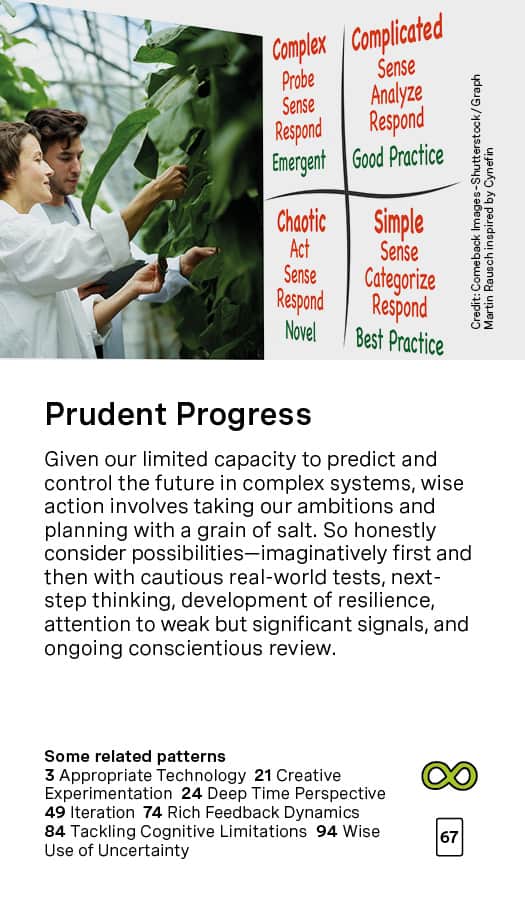

Pattern Heart

Given our limited capacity to predict and control the future in complex systems, wise action involves taking our ambitions and plans with a grain of salt. So honestly consider possibilities: imaginatively first, and then with cautious real-world tests, next-step thinking, development of resilience, attention to weak but significant signals, and ongoing conscientious review.

Prudent Progress – going deeper …

This is an edited version of the video on this page.

This pattern is inspired by the situation we have put ourselves in through our technological power and our cognitive limits, which, mixed in with the ways we handle our society, make a very toxic brew.

A poignant joke says: When you’re standing at the edge of a cliff, the next step is not progress. And the Persian poet Rumi said in one of his poems:

Sit, be still and listen.

Because you are drunk

and this is the edge of the roof.

A lot of people share this sense that we are at the edge of a major catastrophe largely of our own making, and that stepping back from it is hard. Yet the forces of progress, the advocates of always moving ahead, declare that technological progress is inevitable: we will always have new knowledge and new ways to do things. There is much that is vibrant and inspiring about that vision but, unfortunately, it is manifesting in many toxic ways in our larger civilizational predicament and in our culture. More and more people are now talking about civilizational collapse and human extinction. These are very big issues emerging around our brilliantly expanding collective power.

This pattern is saying: Okay, let’s value progress, but let’s step back. Prudence is caution: “Know before you go.” Before we take the next big, potentially dangerous step, let’s stop, think, and do some checking to see if we are on the right track. A classic articulation of this idea is called “The Precautionary Principle”. It is a philosophical and scientific principle that says: Any particular technology should not be developed – or at least not released into the environment and used – until it has been proven to be non-toxic and not dangerous. This is a very high standard. It treats a technology as guilty until proven innocent, instead of innocent until proven guilty. It’s based on the recognition that slight changes in a complex system can trigger massive disaster – what’s called “the butterfly effect”.

In 2000, Bill Joy, a tech guru who was one of the co-creators of Java, wrote an article entitled “Why the Future Doesn’t Need Us”. In it he argued that in the next few decades (probably in unpredictable ways), developments in nanotechnology, biotechnology, robotics, and computing power will give us the capacity to create self-replicating entities – viruses, nanorobots, etc. – able to harm us or the environment to such an extent that human extinction will become inevitable. These self-replicating entities could be toxic or could consume things we need on a massive scale. The most important feature of this dire prediction is that individuals or small groups will gain the capacity to create such entities, on purpose or by accident. It won’t be limited to big organizations and governments.

The breakthrough development of CRISPR a few years ago was only one of a number of new developments that make humans even more powerful in ways that are straight out of Bill Joy’s prediction. CRISPR and its cousins make genetic engineering really easy. Somebody with a basic understanding of college-level biology and $10,000 of equipment can start fiddling with microorganisms and create something dangerous: by accident, because they are insane, because they have dire aims for humanity, or simply because they didn’t notice or seriously consider a “side effect” of their otherwise well-intentioned innovation. And since we’re talking about a self-replicating entity, once it is let out into the environment, it will go on replicating.

If anybody can create self-replicating entities of any kind, that is an obvious formula for losing all control of humanity’s destiny.

This is, of course, a very hot issue. But what do we do? Many would say: “But you can’t stop science! Science is really important! It produces medical breakthroughs! We can feed more people!” and so on. This is all true, but there’s a bigger reality involved here.

Video Introduction (19 min)

Examples and Resources

- Cynefin Framework – Link-Atlee essay, Link-Wikipedia
- Change Here Now – a permaculture pattern language, pp. 43-46, 50-52, 60-67, 98-100
- Global Catastrophic Risk – Link-Wikipedia
- Risk Management – Link-Wikipedia
- Sustainability and risk management
- The precautionary principle – Link-Wikipedia
- Full cost accounting – Link-Wikipedia
- Environmental impact statements – Link-Wikipedia
- Technological warnings – Link-Wired, Link-Ideas.ted.com, Link-CII, Link-Wikipedia
- Drawdown Climate Solutions Link
- The Amish and Technology
- On Humility
- Resilient Zone
- Using Diversity and Disturbance Creatively – Pattern Link
- Overshoot (book) Link

A key example of monitoring innovation for appropriateness is the precautionary principle. It says that technology should not be applied in any broad or potentially risky way until it is proven benign. The precautionary principle is extremely conservative, very different from the progressive principle that says we are and always should be developing and advancing, with everything going up and up and up all the time, which is our civilization’s bias at the moment. So the precautionary principle is understandably resisted by ambitious technologists. It is also very hard to apply in a broadly collective way. If the U.S. adopted the precautionary principle, what about China? What about a terrorist network? How do you get the precautionary principle applied everywhere?

That should not be seen as a rhetorical question. It is a real question that demands some creative answers: “Okay, how do we do this clearly necessary thing?”

Full Cost Accounting, another one of the patterns, is very relevant here. Let’s not just look at the upsides of our developing technologies. We have this brilliant ability to make tiny robots that can go around inside us and kill cancer cells. But if we can do that, we should also be able to create tiny robots that go around inside us and kill brain cells or heart cells. The wrong person having this technology would hold very troubling capacities in their hands. So before we go there, shouldn’t we look at the full cost accounting of any new technology? If we thought in terms of full cost accounting, I suspect we would more often apply the precautionary principle.

An existing protocol very much along these lines – albeit a quite mild one, given the way it is usually applied – is the environmental impact statement (EIS). Essentially an EIS asks: “If you are going to do this new development project or create this new technology, what is its environmental impact going to be?” Unfortunately, in practice it’s a corrupted system. But the idea behind it is very much in line with this particular pattern.

Another great addition to an already extensive list of resources (it includes six forms of long-term thinking and six forms of short-term thinking, among many other things): https://medium.com/the-long-now-foundation/six-ways-to-think-long-term-da373b3377a4

CRISPR is a fabulous, enabling, terrifying adventure, as is AI. We’re working right now with a group that runs AI strategy workshops for non-technical teams, and while they work to ground these strategies in human-centered and ethical thinking, the reality is that the group’s enthusiasm for the possible advances, profits, and ease of use always dominates the discussion.

I’m curious about the choice of the Cynefin framework here as the pattern picture. In my encounters with that framework in action, it’s used to find the most viable path forward – but the assumption of a path forward persists. From the essay, however, I read a stronger call to question whether this “forward” should be pursued at all.

Finally, would you consider concepts like the triple bottom line related here? Or would that fit better in another pattern?

Thanks, Elise. CRISPR and AI are, indeed, perfect examples. And the tendency to focus on the positive side (from both profitability and narrow human-benefit perspectives) tends to overshadow both the long-term view (Deep Time Perspective) and the broad view (Systems Thinking), which becomes extremely dangerous as our technological power, global economic interdependence and population/consumption impacts grow.

I know Cynefin only in theory, through its fundamental insight(s). I didn’t know about its application to forward progress. Interesting. Your reading of my essay is correct. We need more breadth and depth and less forwardness…

Triple Bottom Line is appropriate here, but even more so at Full Cost Accounting (where I was surprised not to find it in the Examples and Resources list). I’ve logged it for adding to both pattern pages. Thanks for that.